The following is a lecture I gave to young people applying to study Management at the LSE. The aim of the lecture is to discuss why studying information systems is vital if we are to understand modern management practice.

At the start of 2014, Erik Brynjolfsson and Andrew McAfee of the MIT Sloan School of Management published a book titled “The Second Machine Age” (Brynjolfsson and McAfee 2014). The title is drawn from the idea that information technology is progressing so quickly that digital technology is likely to reinvent our economy, just as the industrial revolution and the steam engine did in the first machine age. Picking up the book’s argument, The Economist used a picture of a tornado ripping through offices to illustrate how, in the near future, information technology is likely to reinvent all aspects of work, including management jobs.

In some ways their thesis sounds like another tired, outdated argument for how wonderful information technology is and how it will change everything. Similar pronouncements were made in the 1960s as computers began to be used for banking and by travel agencies; in the 1970s as computers began to be used for office work such as publishing; in the 1980s as the personal computer arrived on every desk and entered the home; in the 1990s as the Internet emerged as an “Information Superhighway” connecting the world’s information; and again in the 2000s as e-commerce, websites and mobile telephony exploded.

What is different today? Should we accept these authors’ pronouncements and their hyperbolic claim of a “second machine age”? And if we do accept it, what does that mean for the study of management?

Let’s begin by considering where we are with information technology in business today.

Within modern industrialised economies, most businesses already rely on a suite of information technology applications to support their activity. We cannot conceive of a business without IT to manage all the different information flows it needs.

From a small shop’s stock management system and accounting package to a large business running thousands of applications, IT is everywhere. For example, AstraZeneca, a global pharmaceutical company, runs 2,144 applications for its 50,000 users.

Large businesses like these run applications for many different management tasks: applications to manage their key resources (staff, stock, warehousing, etc.); applications to manage their relationships with their customers (for example call centres, websites, online orders and customer email); supply chain management systems to manage suppliers and partners; and finance packages to undertake their accounting. They also run specialist applications created for their particular business. For example, the LSE has applications for timetabling all its classrooms and lecture halls, and applications to book your hall of residence room. These complex information tasks have existed for years. Indeed, the “computing department” predated the computer, as shown in this photo: it was the department in a company responsible for processing information. So what is different today?

If these have existed for years, how then can Brynjolfsson and McAfee justify the argument that we are entering a second machine age?

To consider this we need to think about the role of technology in our economy more generally.

The first machine age was when factories and machines (e.g. steam engines) were created in the “industrial revolution”, and in a short period the entire economy evolved and changed. Yet in 1976 Daniel Bell (Bell 1976) argued that we were a “post-industrial society”, in which the number of employees in “first machine age” businesses was rapidly declining as computerised systems took over from people in repetitive manual labour (for example, simple robots screwing nuts onto the chassis of a car during its production). IT evolved and changed our industrial landscape during this period…

However, at about the same time, Peter Drucker (Drucker 1969), a famous management guru, coined the term “knowledge worker”, describing how knowledge was the key economic resource and so arguing that the human (as central to knowledge creation and application) would continue to reign supreme in knowledge work. The argument was that information technology and machines might replace and mechanise simple repetitive jobs (making a more efficient first machine age), but knowledge work would remain and would be vital for designing, building and supporting these machines.

While a PC might get rid of the typewriter and replace it with a word-processing application, or get rid of the calculator and replace it with an accounting application, the knowledge worker would continue to direct and use these machines. They were supporting knowledge work, not supplanting it. Knowledge work itself could not be mechanised.

This view of knowledge work also aligned with the shifting nature of economies, as labour moved from primary and secondary industries (e.g. agriculture and manufacturing) to tertiary industries (like financial services) in which information technology was increasingly vital to support knowledge work. Essentially, the jobs of knowledge workers in tertiary industries (lawyers, financiers, teachers, doctors, managers, designers, architects and, thankfully for me, academics) were safe. Information technology might support us in our work, and so make us more productive (and perhaps even more prosperous), but ultimately we would still be needed!

Recently, though, we have seen a number of significant shifts in the way information technology is used within and between businesses, shifts which might challenge this assumption.

To understand this, though, we need to stop talking about information technology and begin to talk about information systems, because information technology (computers, printers, applications, smartphones, networks) is only one part of an information system.

An information system is an organised system, composed of technology and people, which collects, organises and processes information. It is about how people are managed and coordinated to use information technology to do some useful task, whether it is a shop owner looking at past sales on a spreadsheet in order to decide what stock to buy, or something more dynamic, globally distributed and complex.

Take, for example, the Lotus Formula 1 racing team (which I had the pleasure of researching last summer). One of the crucial management tasks they face is making decisions on racing strategy during a race. To do this they have a complex information system consisting of: the driver; the car (which relays data about its performance from 150 sensors every hundredth of a second); the pit crew (who can see this data in real time as it is processed by the 36 computers the team keeps trackside, running specialist applications); and a secondary crew seated in an office in Oxfordshire, who see the same information as the pit crew but also have access to all the engineers who designed the car, and to another supercomputer to process this data. The information system, then, is all of this: the people, their expertise, the task (deciding what race strategy should be taken) and the technology (the car, its instruments, the computers, software, screens, networking, etc.). The management decision on race strategy is effectively taken by all these components coordinating together, and its success can have profound implications for the team’s financial performance.

Less extreme information systems are everywhere. They got you into this lecture theatre this morning (remember the people with clipboards checking your name against a list), as did the Oyster card you used to get the Tube here and the booking system you used to book your hotel.

What, then, might have changed recently to justify the name “second machine age”, and to justify why you need to study information systems within a degree on management?

I would like to argue that two things might justify this argument today (these are drawn from my own research rather than from Brynjolfsson and McAfee’s book): digital abundance, and connections and coordination.

1) Digital Abundance

Until quite recently, business information systems managed information at a local level. Information was a scarce resource, and managers were employed to produce it (for example, by getting people to walk around the shop floor counting things), analyse it (putting that data into a spreadsheet and deciding what was selling well) and act upon it (telling staff to order more of those things).

Today, however, data is everywhere and is usually created automatically: by shops’ point-of-sale systems (tills to you and me), by supply chain management systems and by stock tagging. Staff might still walk around, but they now carry a computer and scanner and input this data directly into a huge database at head office. And because computer storage and processing are extremely cheap today, companies can go further and include other sources of information in their decisions. For example, they can process Internet-sourced information (Facebook, Google, Twitter) to understand how people perceive a product or brand; they can process weather information to understand likely consumption patterns; they can process all their past sales information; and they can even process data on customers: their buying patterns, their habits, census data about where they live, even how they walk around a store (using the Wi-Fi signals from their smartphones, or CCTV). Decision making has thus shifted from a scarcity of information to an abundance.

2) Connections and coordination

Management decision making is thus about dealing with this profusion of data and trying to identify causes and inferences from the connections within it, and yet we are beginning to learn that this is often beyond human comprehension. For example, in some supermarkets nappies and beer are advertised together on Friday evenings. Why? Because an information system with access to all sales data noted a direct correlation between sales of nappies and beer on Friday evenings, and from this the head-office manager can infer that men (mostly) are asked to collect nappies on the way home from work and, if prompted, will probably buy beer at the same time. This decision, however, would probably only have been made through an information system analysing past sales data automatically.

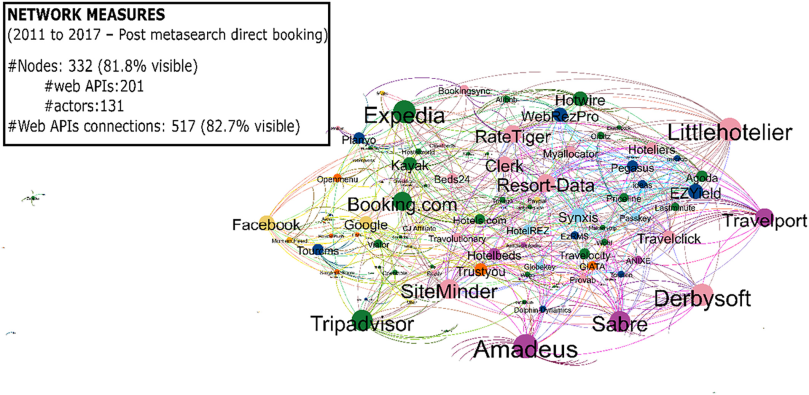
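The lecture does not say how such a system spots the pattern. One common technique in retail analytics is association-rule mining, which scores how much more often two items appear in the same basket than chance alone would predict, a measure called lift: P(a and b) / (P(a) × P(b)). The sketch below is a minimal illustration under loudly stated assumptions: the baskets are invented, “Friday evening” is assumed to mean 5pm onwards, and `rank_pairs_by_lift` and `is_friday_evening` are hypothetical helpers, not any supermarket’s actual system.

```python
from collections import Counter
from itertools import combinations

# Hypothetical till data: (weekday, hour of sale, items in the basket).
# These baskets are invented purely for illustration.
BASKETS = [
    ("Fri", 18, {"nappies", "beer"}),
    ("Fri", 19, {"nappies", "beer", "crisps"}),
    ("Fri", 18, {"nappies", "beer", "bread"}),
    ("Fri", 20, {"beer", "crisps"}),
    ("Fri", 17, {"nappies", "beer", "milk"}),
    ("Fri", 19, {"bread", "milk"}),
    ("Fri", 21, {"crisps", "milk"}),
    ("Fri", 18, {"nappies", "beer", "crisps"}),
    ("Tue", 10, {"nappies", "milk"}),
    ("Wed", 12, {"beer", "crisps"}),
    ("Sat", 11, {"nappies", "bread"}),
    ("Mon", 9, {"bread", "milk"}),
    ("Thu", 14, {"beer"}),
    ("Sun", 16, {"nappies", "milk", "bread"}),
]


def is_friday_evening(day, hour):
    """Assumed definition (hypothetical): Friday, from 5pm onwards."""
    return day == "Fri" and hour >= 17


def rank_pairs_by_lift(baskets, min_count=2):
    """Score every pair of items by lift = P(a and b) / (P(a) * P(b)).

    Lift above 1 means the two items are bought together more often than
    chance would predict. Pairs seen in fewer than `min_count` baskets are
    skipped so that one-off coincidences do not top the list.
    """
    n = len(baskets)
    item_counts = Counter()
    pair_counts = Counter()
    for items in baskets:
        item_counts.update(items)
        # Sorting gives each pair one canonical order, e.g. ("beer", "nappies").
        pair_counts.update(combinations(sorted(items), 2))
    scores = {}
    for (a, b), both in pair_counts.items():
        if both >= min_count:
            scores[(a, b)] = (both * n) / (item_counts[a] * item_counts[b])
    # Highest lift first; ties broken alphabetically for a stable order.
    return sorted(scores.items(), key=lambda kv: (-kv[1], kv[0]))


if __name__ == "__main__":
    friday = [items for day, hour, items in BASKETS if is_friday_evening(day, hour)]
    other = [items for day, hour, items in BASKETS if not is_friday_evening(day, hour)]
    print("Friday evening:", rank_pairs_by_lift(friday))
    print("Other times:", rank_pairs_by_lift(other))
```

On this invented data the beer and nappies pair tops the Friday-evening ranking and does not appear at all for other times. Note that lift only measures co-occurrence; the explanation about who is doing the shopping is still a human inference layered on top, as in the example above.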

But connection is also about extending information systems beyond a single enterprise. An innovation termed “cloud computing” has moved much of the IT used by many organisations out of the organisation and onto the Internet. This has increasingly allowed them to create connections and networks between organisations, and to build ever more profitable, and ever more complex, information systems.

In a book I recently co-authored with two colleagues here at the LSE, we termed this “moving to the cloud corporation”, and we described a company called NewGrove, which specialises in helping businesses by connecting and collating huge quantities of data from both inside and outside the organisation and displaying it on Google Maps, with colours, images and graphs showing how the business operates on a street-by-street basis. Managers with NewGrove’s information system can, arguably, make more informed decisions than competitors without such systems.

Connections and coordination are perhaps most evident at an airport. Who flew here today, or yesterday? How many of you didn’t really interact with a human being except at the departure gate (and perhaps the coffee stand)? Instead, your passage through the airport was coordinated by a plethora of systems interacting and communicating with each other. In the USA, you put your frequent-flyer or credit card into a machine, and in those three or four seconds a conversation takes place between various machines: flight-status systems, airline systems, your past travel history, a check of your name with the Transportation Security Administration (and perhaps the NSA), food providers, computers at the destination, connecting flights, weight distribution on the plane. Brian Arthur (Arthur 2011) described this as “a second economy” alongside the visible economy. Throughout it, complex decisions and inferences that were previously made by human beings are made by software.

However, as software and computing power improve, as more IT becomes available through the shift to cloud computing, and as more data can be processed, so the decisions and inferences can become more complex, and appear more like Peter Drucker’s “knowledge work”.

In 2010 IBM built a computer system called Watson, capable of winning the complex game show “Jeopardy!” against the show’s two best-ever players by keeping a gigantic database of information (taken from the Internet), some 200 million pages of data, available in its memory. Today Watson is being developed for sale as a management-support system to work alongside physicians, lawyers, financial services and government. Note that since 2010 Watson has improved its performance by 2,300 percent and shrunk to the size of three pizza boxes.[1]

More importantly, however, cloud computing makes large-scale computing power available to any business, paid for with a credit card. The key lesson here, then, is not that these things are going to be amazing, but that they may become integrated into the mundane business systems central to every business today.

Finally, these connections and their coordination change businesses and business models. Obviously music, TV, newspapers and photography have been fundamentally reconfigured by information technology. Financial markets, too, have been changed as companies harness similar data abundance to produce computer systems that trade automatically, making complex financial decisions at huge speed through complex information systems which connect data from across the world. This was made obvious on 6 May 2010 at about 2:45pm, when around 9% was wiped off the Dow Jones in about five minutes. During this time it was not human traders who were leading the volume of trades, but algorithmic trading systems created to play the market by making decisions based on vast quantities of data at speeds no human can match.

Now, obviously, the examples I have used in this talk so far might be classed as extreme, but they hopefully paint a picture. Information technologies are no longer simply tools used by managers; information systems are integrated, connected, coordinated systems with an abundance of data at their disposal, and they are already making complex management decisions.

Knowledge work is no longer, Brynjolfsson and McAfee would argue, the preserve of the human, and so we are entering a second machine age.

So, in conclusion, what I want you to take away is that:

1) Even if you don’t think you want to study computers, you should ensure that you understand information systems at a basic level, because they are as integral, pervasive and important as the people in a business, though often less visible. If we want to understand how businesses work from a financial or human resources perspective, we should probably do the same from an information systems perspective.

2) Information Systems are part of what an organisation is and shape the way organisations evolve and change. We need to understand how to better design and build such systems, and how such systems constrain and shape businesses.

3) We must understand how to prepare for the information systems created by others (be they competitors, partners or government): Uber’s[2] impact on taxi firms, TripAdvisor’s[3] on hotel chains, Flickr’s and Facebook’s on Kodak, Amazon’s on retail and publishing, Google’s on mapping and search. When managers ignore the impact of information systems on their business, they are taking fundamental risks with their organisation’s future.

Thank you,

Will Venters.